Lesson 10: Projections — Building Pre-Computed Read Models

What We’re Building Today

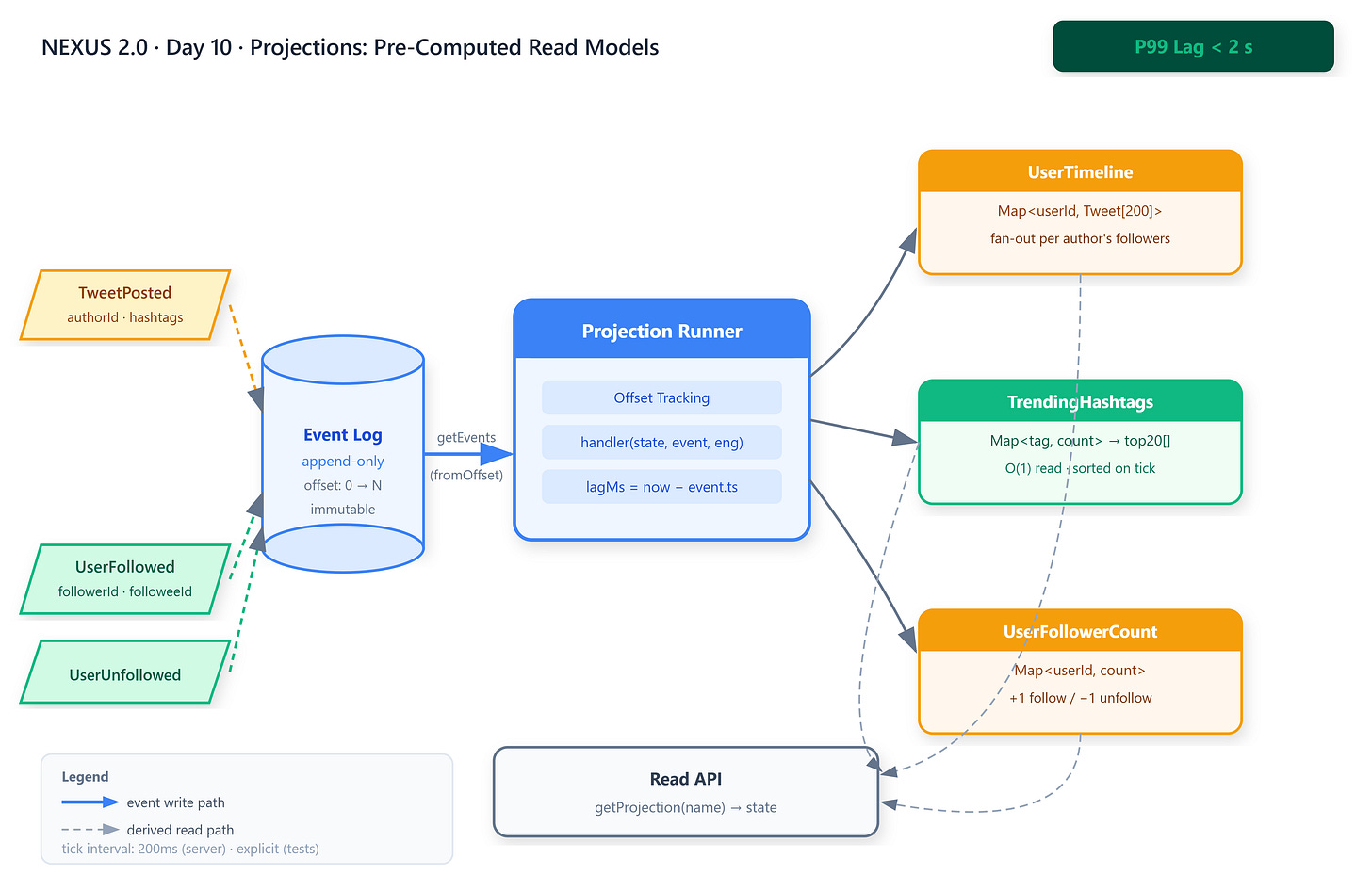

Three background projection consumers (UserTimeline, TrendingHashtags, UserFollowerCount) that process events from the NEXUS event log and maintain always-current derived state

An offset-tracking engine that guarantees each event is processed exactly once per projection, making consumers resumable and keeping their lag measurable and bounded

A lag measurement tool that emits { projectionName: lagMs } after every tick: the operational heartbeat that proves the read path stays within 2 seconds of the write path

Why This Matters

LinkedIn’s Galene search service (2012) exposed a structural problem hiding inside CQRS: write-side separation doesn’t automatically give you a fast read side. Before projections, reconstructing a member profile for search required joining endorsements, positions, skills, and connections at query time — 47 table reads per request. Median read latency was 340ms. Galene’s solution was to consume LinkedIn’s Databus change stream and pre-compute a single searchable document per member. Median dropped to 4ms. Without projections, NEXUS’s read API faces the same compounding cost: every timeline request would replay the event log from offset zero, re-aggregating O(events) work per user per request.

Core Concepts

Event Log as the Single Source of Truth

An event log is not a database — it is an immutable ledger. No row is ever updated; every state change is a new append. The consequence is radical: any derived state can be thrown away and rebuilt by replaying from offset zero. Projections exploit this guarantee. They are not authoritative stores; they are caches that happen to be correct. The event log is what’s true. The projection is what’s fast. In NEXUS, appendEvent(eventType, payload) writes to this log. Everything downstream is derived.
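The append-only property and the "rebuild by replay" guarantee can be sketched in a few lines. This is a minimal illustration, not the NEXUS implementation: only the appendEvent(eventType, payload) name comes from the lesson, while the LogEvent shape, the EventLog class, readFrom, rebuild, and the event type names are assumptions made for the example.

```typescript
// Hypothetical event shape; the lesson only names appendEvent(eventType, payload).
interface LogEvent {
  offset: number;     // position in the log, assigned on append
  eventType: string;
  payload: unknown;
  timestamp: number;  // milliseconds since epoch, recorded at write time
}

class EventLog {
  private readonly events: LogEvent[] = [];

  // Append only: existing entries are never updated or removed.
  appendEvent(eventType: string, payload: unknown, now: number = Date.now()): LogEvent {
    const event: LogEvent = { offset: this.events.length, eventType, payload, timestamp: now };
    this.events.push(event);
    return event;
  }

  // Returns a copy of every event at or after fromOffset.
  readFrom(fromOffset: number): LogEvent[] {
    return this.events.slice(fromOffset);
  }
}

// Any derived state can be rebuilt by folding over the log from offset zero.
function rebuild<S>(log: EventLog, initial: S, apply: (state: S, e: LogEvent) => S): S {
  return log.readFrom(0).reduce(apply, initial);
}

const eventLog = new EventLog();
eventLog.appendEvent("PostCreated", { userId: "u1" });   // assumed event type names
eventLog.appendEvent("PostCreated", { userId: "u2" });
eventLog.appendEvent("UserFollowed", { follower: "u2", followee: "u1" });

const countPosts = (n: number, e: LogEvent): number =>
  e.eventType === "PostCreated" ? n + 1 : n;

const postCount = rebuild(eventLog, 0, countPosts);
const postCountAfterDiscard = rebuild(eventLog, 0, countPosts); // thrown away and rebuilt
```

Because the log never changes existing entries, both rebuilds produce the same count; the derived value can be discarded at any time without losing information.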

The production tradeoff: write amplification is eliminated (one append, not multiple table updates), but read freshness becomes probabilistic — lag, not zero. Operators must decide what lag is acceptable and instrument it.

Offset-Based Consumption

Every event carries a monotonically increasing offset: its position in the log. A projection tracks lastOffset: N, meaning “I have processed every event before position N, and N is the next one to read.” On each tick, the runner fetches events[N..], processes them, then advances lastOffset to N + processed.length. This is the same convention Redpanda (and Kafka before it) uses for consumer groups, where the committed offset is the position of the next record to consume.

The critical invariant: lastOffset is local state owned by the projection, not the log. If you reset it to zero, you replay from the beginning. If you checkpoint it to disk, you survive process restarts without reprocessing. In NEXUS’s in-process engine, lastOffset lives in memory. In the ExternalEngine, it maps to a consumer group offset committed to Redpanda.
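A rough sketch of the tick, checkpoint, and reset behavior described above. It assumes lastOffset holds the next position to read, so that resetting it to zero replays the whole log. The Projection class, its seen array, and the JSON string standing in for a disk checkpoint are all hypothetical names for this example.

```typescript
interface LogEvent {
  offset: number;
  eventType: string;
  timestamp: number;
}

class Projection {
  lastOffset = 0;        // next offset to read; everything before it is processed
  seen: number[] = [];   // offsets handled so far, kept only for this demo

  // One tick: fetch events[lastOffset..], process them, advance the offset.
  tick(log: LogEvent[]): number {
    const batch = log.slice(this.lastOffset);
    for (const e of batch) this.seen.push(e.offset);
    this.lastOffset += batch.length;
    return batch.length;
  }

  // Stand-in for a checkpoint written to disk.
  checkpoint(): string {
    return JSON.stringify({ lastOffset: this.lastOffset });
  }

  // Resume from a checkpoint without reprocessing earlier events.
  static restore(saved: string): Projection {
    const p = new Projection();
    p.lastOffset = (JSON.parse(saved) as { lastOffset: number }).lastOffset;
    return p;
  }
}

const offsetLog: LogEvent[] = [0, 1, 2].map((offset) => ({
  offset,
  eventType: "PostCreated",   // assumed event type name
  timestamp: 1000 + offset,
}));

const projection = new Projection();
const firstTick = projection.tick(offsetLog);    // processes offsets 0, 1, 2
const secondTick = projection.tick(offsetLog);   // nothing new to process

offsetLog.push({ offset: 3, eventType: "PostCreated", timestamp: 1003 });
const thirdTick = projection.tick(offsetLog);    // processes only offset 3

// A restarted process picks up where the checkpoint left off.
const restored = Projection.restore(projection.checkpoint());
const restoredTick = restored.tick(offsetLog);

// Resetting the offset to zero replays from the beginning.
const replay = new Projection();
const replayTick = replay.tick(offsetLog);
```

The offset belongs to the projection, not to the log: two projections can read the same array at different positions without coordinating.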

Projection State as Precomputed Memoization

A projection’s state is the memoized answer to a specific read query. UserTimeline answers “what are the last 200 posts visible to this user?” without touching the event log at read time. TrendingHashtags answers “what are the top 20 tags right now?” in a single in-memory lookup. The state machine is simple: handler(currentState, newEvent, engine) → mutates currentState. Because handlers mutate state in place, they are not pure in the strict functional sense, but they are deterministic: the same starting state plus the same event always produces the same resulting state, which makes them testable in isolation.
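To make the handler idea concrete, here is a hedged sketch of what a TrendingHashtags handler might look like. The state shape, the payload.tags field, the "PostCreated" event type, and the topTags helper are assumptions for illustration. The engine argument from the lesson's signature is omitted because its interface is not described here.

```typescript
interface LogEvent {
  offset: number;
  eventType: string;
  payload: { tags?: string[] };   // assumed payload shape
}

// Projection state: tag -> number of posts that used it.
type TrendingState = Record<string, number>;

// Mutates state in place, following the lesson's handler signature.
function trendingHandler(state: TrendingState, event: LogEvent): void {
  if (event.eventType !== "PostCreated") return;
  for (const tag of event.payload.tags || []) {
    state[tag] = (state[tag] || 0) + 1;
  }
}

// Read side: answer "top N tags" from memory, without touching the log.
function topTags(state: TrendingState, limit: number): string[] {
  return Object.keys(state)
    .sort((a, b) => state[b] - state[a] || a.localeCompare(b))
    .slice(0, limit);
}

const sampleEvents: LogEvent[] = [
  { offset: 0, eventType: "PostCreated", payload: { tags: ["ts", "cqrs"] } },
  { offset: 1, eventType: "PostCreated", payload: { tags: ["cqrs"] } },
  { offset: 2, eventType: "UserFollowed", payload: {} },
  { offset: 3, eventType: "PostCreated", payload: { tags: ["ts", "cqrs"] } },
];

// Determinism check: two fresh states fed the same events end up identical.
const stateA: TrendingState = {};
const stateB: TrendingState = {};
for (const e of sampleEvents) trendingHandler(stateA, e);
for (const e of sampleEvents) trendingHandler(stateB, e);

const top = topTags(stateA, 1);
```

Testing a handler this way needs no log, no runner, and no clock: a starting state and a list of events are enough.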

The tradeoff is memory. Projections trade compute (at read time) for memory (pre-computed state). A UserTimeline capped at 200 entries per user with 50K users consumes roughly 200MB at 20 bytes per entry — acceptable. An uncapped projection of every raw event is a memory leak.
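The cap and the memory estimate can be checked with a small sketch. The figures (200 entries, 50K users, 20 bytes per entry) come from the paragraph above; the function names and the newest-first array layout are assumptions for this example, and real per-entry overhead in a JavaScript runtime would be higher than the raw 20 bytes.

```typescript
const MAX_TIMELINE_ENTRIES = 200;

// Insert newest-first and drop the oldest entries beyond the cap.
function pushCapped(timeline: string[], postId: string, cap: number = MAX_TIMELINE_ENTRIES): void {
  timeline.unshift(postId);
  if (timeline.length > cap) timeline.length = cap;
}

// Back-of-envelope size of the projection: users x entries x bytes per entry.
function estimateBytes(users: number, entriesPerUser: number, bytesPerEntry: number): number {
  return users * entriesPerUser * bytesPerEntry;
}

const timeline: string[] = [];
for (let i = 0; i < 205; i++) pushCapped(timeline, "post-" + i);

const totalBytes = estimateBytes(50000, MAX_TIMELINE_ENTRIES, 20);
const totalMegabytes = totalBytes / 1e6;   // 200 MB with the lesson's figures
```

Without the cap, the timeline length grows with every event, so the estimate has no upper bound.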

Lag as a First-Class SLO

Lag is the gap between when an event was written and when it was reflected in projection state. It is the contractual SLO between the write path and read path. A lag of 0ms means synchronous projection — the write doesn’t return until all projections update, which destroys write throughput. A lag of 2s means the read path may show state up to 2 seconds stale — acceptable for timelines and trending, unacceptable for follower counts shown during a live unfollow UI flow.

In NEXUS, getLag() returns { projectionName: lastProcessedAt - lastEvent.timestamp }, one entry per projection, in milliseconds. The projection runner exposes this as a metrics endpoint. When lag exceeds the threshold, the on-call alarm fires: not because data is wrong, but because the read/write contract is at risk.
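A possible shape for the lag calculation and the threshold check, sketched under assumptions: the ProjectionStatus record and the breaches helper are hypothetical, and lastEvent is taken to mean the most recent event the projection has applied. Only the getLag name, the subtraction, and the 2-second target come from the lesson.

```typescript
interface ProjectionStatus {
  name: string;
  lastProcessedAt: number;      // when the projection applied its latest event (ms)
  lastEventTimestamp: number;   // when that event was written to the log (ms)
}

// Returns { projectionName: lagMs } for every projection.
function getLag(projections: ProjectionStatus[]): Record<string, number> {
  const lag: Record<string, number> = {};
  for (const p of projections) {
    lag[p.name] = p.lastProcessedAt - p.lastEventTimestamp;
  }
  return lag;
}

// Names of projections whose lag exceeds the threshold.
function breaches(lag: Record<string, number>, thresholdMs: number): string[] {
  return Object.keys(lag).filter((name) => lag[name] > thresholdMs);
}

const lagReport = getLag([
  { name: "UserTimeline", lastProcessedAt: 10500, lastEventTimestamp: 10000 },
  { name: "TrendingHashtags", lastProcessedAt: 13000, lastEventTimestamp: 10000 },
]);

const alarms = breaches(lagReport, 2000);   // 2-second target from the lesson
```

With these sample timestamps, UserTimeline is 500 ms behind and stays quiet, while TrendingHashtags is 3000 ms behind and would trip the alarm.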