Lesson 19: Message Queue Scaling with Apache Kafka

Building Twitter's Real-Time Message Backbone

What We're Building Today

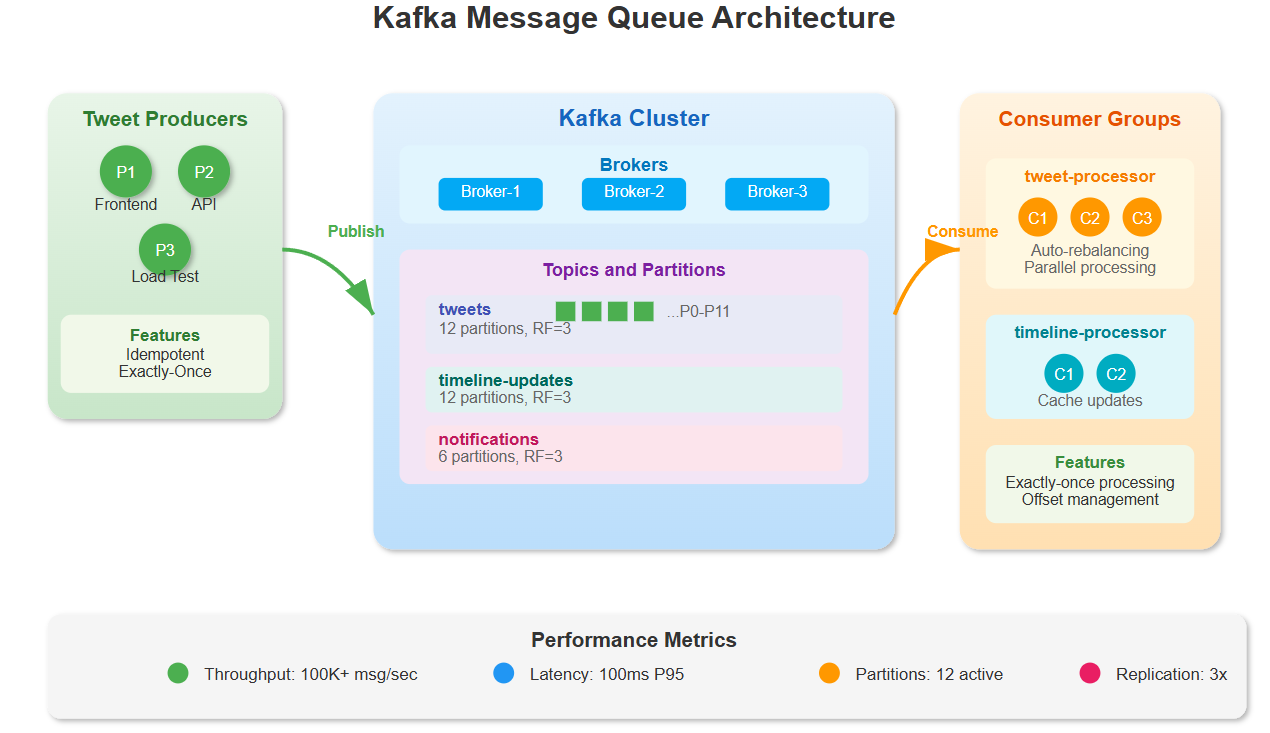

Today we're scaling our Twitter clone's messaging system to handle 100,000 messages per second using Apache Kafka. We'll implement:

Kafka cluster with intelligent partitioning for parallel tweet processing

Exactly-once delivery guarantees ensuring no duplicate tweets

Real-time message streaming connecting tweet creation to timeline updates

Producer-consumer architecture that scales horizontally


The Message Queue Challenge at Twitter Scale

Twitter processes hundreds of millions of tweets per day, and every tweet triggers a cascade of events: timeline updates, notifications, trend calculations, and recommendation updates. A single tweet from a popular account can generate thousands of downstream messages.

Traditional messaging tools struggle at this scale. Redis Pub/Sub works well for simple scenarios, but it does not persist messages (a subscriber that is offline simply misses them) and has no built-in partitioning. RabbitMQ provides reliable delivery, but scaling the throughput of a single queue horizontally is harder. Kafka addresses both problems with a partitioned, persistent log distributed across brokers.

Core Concepts: Kafka's Distributed Architecture

Partitioning for Parallel Processing: Kafka topics are split into partitions, each holding a subset of the topic's messages. Think of it like multiple checkout lanes at a grocery store: instead of one slow line, you have several lanes working in parallel. Each partition preserves the order of its own messages while allowing different partitions to be consumed in parallel.

Producer-Partition Assignment: Producers attach a partition key (such as user_id) to each message, and messages with the same key are hashed to the same partition. This preserves ordering for each user's events while spreading load across partitions.
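
To make key-based routing concrete, here is a minimal sketch that simulates it in plain Python with no broker involved. The partition count, the `partition_for` and `route` helpers, and the sample events are all assumptions for illustration. CRC32 is used as a stand-in hash; Kafka's Java client hashes keys with murmur2, so the actual partition numbers will differ.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 6  # assumed partition count for the "tweets" topic


def partition_for(key: str, num_partitions: int = NUM_PARTITIONS) -> int:
    """Map a key to a partition. CRC32 is a stand-in for Kafka's murmur2 hash."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def route(events, num_partitions: int = NUM_PARTITIONS):
    """Append each (user_id, payload) event to the partition chosen by its key."""
    logs = defaultdict(list)
    for user_id, payload in events:
        logs[partition_for(user_id, num_partitions)].append((user_id, payload))
    return logs


# Hypothetical events: alice's tweets are interleaved with bob's.
events = [
    ("alice", "tweet 1"),
    ("bob", "tweet 1"),
    ("alice", "tweet 2"),
    ("alice", "tweet 3"),
]
logs = route(events)

# All of alice's events sit in one partition, in the order they were sent.
alice_partition = partition_for("alice")
alice_events = [p for user, p in logs[alice_partition] if user == "alice"]
```

The same key always hashes to the same partition, so per-user ordering holds as long as the partition count stays fixed. Changing the number of partitions changes the mapping for existing keys.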

Consumer Groups for Scalability: Multiple consumer instances form a group, and within a group each partition is consumed by exactly one member. Adding consumers increases processing capacity, up to one consumer per partition, and Kafka automatically rebalances partitions across the group when members join or leave.
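
The assignment rule can be sketched as a small function. This is a simplified round-robin illustration, not Kafka's actual assignor logic (Kafka ships several strategies, including range, round-robin, and sticky variants); the `assign` helper and the consumer names are hypothetical.

```python
def assign(partitions, consumers):
    """Round-robin sketch: every partition gets exactly one owner in the group."""
    members = sorted(consumers)
    if not members:
        raise ValueError("a consumer group needs at least one member")
    assignment = {member: [] for member in members}
    for i, partition in enumerate(sorted(partitions)):
        assignment[members[i % len(members)]].append(partition)
    return assignment


# Two consumers share six partitions evenly.
two_members = assign(range(6), ["c1", "c2"])

# Scaling past the partition count leaves the extra consumers idle.
eight_members = assign(range(6), [f"c{i}" for i in range(8)])
idle = [member for member, owned in eight_members.items() if not owned]
```

With eight consumers and six partitions, two members own nothing, which is why the partition count sets the ceiling on parallelism within one group.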

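Exactly-Once Semantics: Kafka combines idempotent producers with transactions to prevent duplicates. An idempotent producer tags each batch with a producer ID and a per-partition sequence number, so the broker can detect and discard a write that was retried. Transactions let a consume-process-produce step commit its output messages and its consumer offsets atomically, and consumers reading with the read_committed isolation level see only committed messages.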
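
A minimal sketch of how sequence numbers make retries safe, assuming a partition log that remembers the last sequence number it accepted from each producer. The `PartitionLog` class is a hypothetical simplification: a real broker tracks sequences per batch and keeps more state than this.

```python
class PartitionLog:
    """In-memory stand-in for one partition with a duplicate check on append."""

    def __init__(self):
        self.records = []
        self.last_seq = {}  # producer_id -> last accepted sequence number

    def append(self, producer_id: str, seq: int, value: str) -> bool:
        """Return True if the record was appended, False if it was a duplicate."""
        last = self.last_seq.get(producer_id, -1)
        if seq <= last:
            return False  # already written: this is a retry, so drop it
        if seq != last + 1:
            raise ValueError(f"out-of-order sequence {seq}, expected {last + 1}")
        self.records.append(value)
        self.last_seq[producer_id] = seq
        return True


log = PartitionLog()
first = log.append("producer-1", 0, "tweet A")
retry = log.append("producer-1", 0, "tweet A")  # network retry of the same write
second = log.append("producer-1", 1, "tweet B")
```

The retried write is acknowledged without being appended again, so the log ends up with one copy of each tweet even though the producer sent "tweet A" twice.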

Context in Distributed Systems: Twitter's Message Flow

In our Twitter system, messages flow through multiple stages:

Tweet Creation: User posts tweet → Producer sends to "tweets" topic

Fan-out Processing: Consumer reads tweet → Generates follower notifications

Timeline Updates: Fan-out service produces timeline update messages

Real-time Delivery: WebSocket service consumes updates → Pushes to connected users
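
The four stages above can be sketched end to end with in-memory queues standing in for Kafka topics. Everything here is an assumption for illustration: the "timeline-updates" topic name, the `FOLLOWERS` graph, and the helper functions. Note that real Kafka consumers do not remove messages; they track an offset into a persistent log.

```python
from collections import defaultdict, deque

# topic name -> queue; a stand-in for Kafka topics (real consumers track offsets)
topics = defaultdict(deque)

# Hypothetical follower graph: author -> followers
FOLLOWERS = {"alice": ["bob", "carol"], "bob": ["alice"]}


def post_tweet(author: str, text: str) -> None:
    """Stage 1: the producer sends the tweet to the "tweets" topic."""
    topics["tweets"].append({"author": author, "text": text})


def run_fanout() -> None:
    """Stages 2 and 3: read tweets, emit one timeline update per follower."""
    while topics["tweets"]:
        tweet = topics["tweets"].popleft()
        for follower in FOLLOWERS.get(tweet["author"], []):
            topics["timeline-updates"].append({"user": follower, **tweet})


def run_delivery(connected: set) -> dict:
    """Stage 4: push updates to users who currently have an open connection."""
    delivered = defaultdict(list)
    while topics["timeline-updates"]:
        update = topics["timeline-updates"].popleft()
        if update["user"] in connected:
            delivered[update["user"]].append(update["text"])
    return dict(delivered)


post_tweet("alice", "hello")
run_fanout()
delivered = run_delivery(connected={"bob"})
```

One tweet from alice becomes two timeline updates, and only bob, the connected follower, receives a push. In the real system each stage would be a separate service with its own consumer group.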